AIAAIC Alert #13

Foundation/frontier model transparency; Constitutional AI; Deepfakes in Sudan; Amazon Alexa misinformation; New volunteers

AI, algorithmic, and automation trust and transparency

#12 | November 3, 2023

Foundation/frontier model transparency

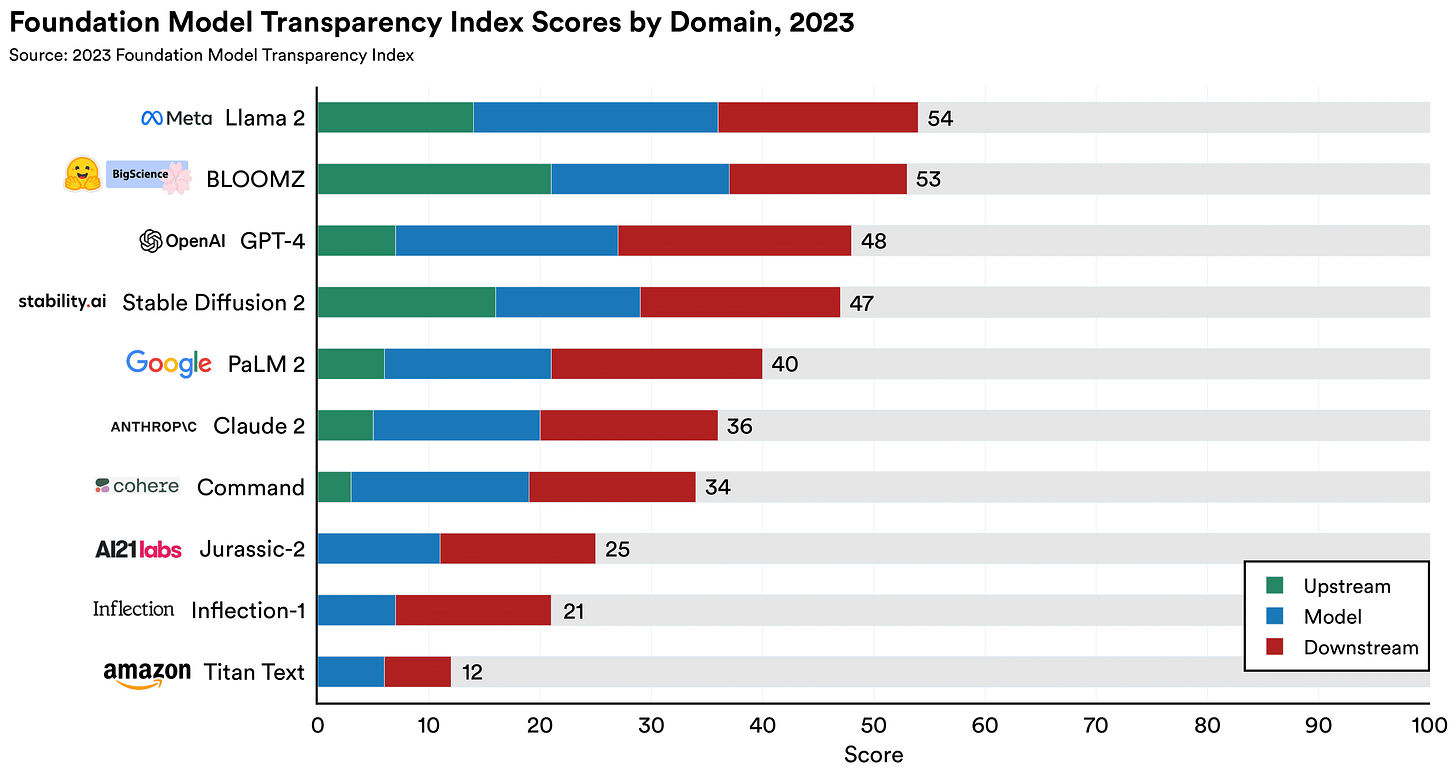

A rigorous if contested Stanford University index ranking the transparency of foundation models, including OpenAI’s GPT-4 and Anthropic’s Claude 2, finds that none score well, ‘revealing a fundamental lack of transparency in the AI industry.’

Perhaps unsurprisingly, the highest-scoring models (Meta’s Llama 2, BigScience’s BLOOMZ, and Stability AI’s Stable Diffusion 2) were open source and released with their model weights. Meanwhile, ‘closed’ developers were found to provide very little information on the data, labour, and compute used to build their models.

The researchers also found that none of the developers studied provides any information on the ‘downstream’ impacts of their models on individuals, sectors, and geographies.

Regrettably, this omission is conspicuously side-stepped in this week’s welter of governmental policy statements, which focus on potential risks rather than actual harms.

President Biden’s AI Executive Order requires that developers of AI systems seen to pose a ‘serious’ risk to national security, national economic security, or national public health and safety notify the US federal government when training their models.

The UK AI Summit’s Bletchley Declaration merely ‘encourages’ foundation model developers to ‘provide context-appropriate transparency and accountability on their plans to measure, monitor and mitigate *potentially* harmful capabilities and the associated effects that may emerge, in particular to prevent misuse and issues of control, and the amplification of other risks’ (italics added).

And the G7 Hiroshima AI Process Guiding Principles for Organisations Developing AI Systems (pdf) states that developers are ‘*encouraged* to consider [...] facilitating third-party and user discovery and reporting of issues and vulnerabilities after deployment’ (italics added).

Real transparency will only be achieved when the actual harms of frontier, foundation and other high-risk AI models and systems are fully disclosed. Only then can regulators, auditors, users and others make informed decisions.

Recommended reads, listens, and watches

Research by the Ada Lovelace Institute found that Brits have positive attitudes towards the use of AI in health and science development, but are concerned about its use in decisions that affect their lives, such as welfare benefit eligibility, and strongly support the protection of fundamental rights | link

An online deliberation process involving 1,000 Americans revealed that Anthropic’s ‘Constitutional AI’, developed by its employees to make its AI systems ‘harmless’, was less aligned with public opinion than the company had assumed. And beyond the US? | link

Anthropomorphism is increasingly ‘designed-in’ to chatbots and other applications, resulting in the deliberate and systemic manipulation of consumers and citizens, according to a punchy report from US consumer advocacy group Public Citizen. This appears to be less of an issue in Japan and other east Asian markets. With the ‘east’ rising, will western views evolve? | link

Google DeepMind published a paper setting out the ins and outs of a socio-technical safety evaluation of generative AI systems. Useful, even if its harms taxonomy (pp. 30-31) is limited | link (pdf)

AI is not just turbo-charging disinformation but also eroding freedoms and enabling authoritarian governments in Myanmar and elsewhere to strengthen online censorship, according to US democracy advocacy group Freedom House. AI is providing convenient cover for government interventions that limit basic rights and freedoms | link

In a quasi-utopian screed, venture capitalist Marc Andreessen castigates AI existentialists, risk managers, ESG and tech ethics professionals, and anyone else seemingly concerned about the unbridled development and use of technology for just about any purpose. No great surprise, but why has Andreessen chosen to mouth off in this way now? | link

AI and algorithmic incidents and controversies

New additions to the AIAAIC Repository during October 2023. All entries are categorised as Incidents unless otherwise stated. Click here for classifications and definitions.

Deepfake audio falsely depicts Barack Obama discussing conspiracy theory

AI campaign impersonates former Sudan leader Omar al-Bashir

Defence lawyer using AI 'botches' criminal trial closing argument

Mike Huckabee books used to train language models without consent

Study finds Proctorio fails to detect student cheats (2021-22)

Instagram inserts ‘terrorist’ into Palestinians' biography translations

Guillermo Ibarrolla facial recognition wrongful arrest (2019)

Deepfake audio recording falsely depicts British Opposition Leader abusing staff

Proctorio fails to recognise Black Vrije Universiteit student (2022-23)

Miami University student accuses Proctorio of privacy abuse, bias (2020-23)

UBC academic, students accuse Proctorio of privacy abuse (2020-23)

Ugandan police accused of using facial recognition to stifle Museveni term protests (2020)

University of Miami accused of using facial recognition to track student protestors (2020)

Proctoring software companies accused of providing invasive, discriminatory software (2020)

YouTube videos target kids with false AI-generated 'scientific' educational content

Serbia Social Card excludes thousands of welfare beneficiaries (2022)

Israel AI robot machine guns fire tear gas at Palestinian protestors (2022)

Italian car insurers discriminate using place of birth (2012-22)

Visit the AIAAIC Repository for details of these and 1,100+ other AI, algorithmic and automation-driven incidents and controversies

Report an incident or controversy.

AIAAIC policy & research citations, mentions

Bommasani R., Klyman K., Longpre S., Kapoor S., Maslej N., Xiong B., Zhang D., Liang P. The Foundation Model Transparency Index (pdf)

Ariffin A.S., Kamaruddin N.H., Juhari M.R.M., Jamil E.M., Dolah R., Muhtazaruddin M. N. The Reliability, Safety, and Control Principle on Recognition of Facial Expression Through Deep Learning to Support Responsible AI Implementation Policy

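Trần Khánh Lâm. Đạo đức nghề nghiệp kế toán: Xây dựng khung quy định trong thời đại công nghệ mới bùng nổ [Accounting professional ethics: Building a regulatory framework in an era of booming new technology]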

Arora A. et al. Risk and the future of AI: Algorithmic bias, data colonialism, and marginalization

Ruttkamp-Bloem E. Intergenerational Justice as Driver for Responsible AI

Lee H.-P. (Hank) et al. Deepfakes, Phrenology, Surveillance, and More! A Taxonomy of AI Privacy Risks (pdf)

Groza A., Marginean A. Brave new world: Artificial Intelligence in teaching and learning

Digital Rights Foundation. The Machine is rigged – a deep dive into AI-powered screening systems and the biases they perpetuate

Common Sense. AI and Our Kids: Common Sense Considerations and Guidance for Parents, Educators, and Policymakers 2023 (pdf)

Explore research reports citing or mentioning AIAAIC.

AIAAIC in the news

Al-Masry Al-Youm. «القبض» على الذكاء الاصطناعى ['Arresting' artificial intelligence]

Project Management Institute. Leading AI-driven Business Transformation: Are You In?

DirigentesDigital. Inteligencia artificial: estado actual, ética y legislación [Artificial intelligence: current state, ethics and legislation]

More news and coverage mentioning AIAAIC

AIAAIC news

AIAAIC is delighted to welcome Ed Barnetson and Sahaj Vaidya to its ranks of volunteers.

A master's student at the University of Bristol with a focus on technology ethics and political philosophy, Ed is helping AIAAIC document the use of generative AI tools to create and spread mis/disinformation, undermine autonomy, and influence political processes.

Sahaj is a third-year doctoral student at the New Jersey Institute of Technology focused on ethical AI governance and data-driven decision-making. She is also on the management board of AI Education Network. Sahaj is starting by helping AIAAIC develop its new risks and harms taxonomy.