AIAAIC Alert #14

Generative AI governance; ChatGPT incidents; Risks and harms taxonomy; New volunteers and research

AI, algorithmic, and automation trust and transparency

#14 | December 4, 2023

Transparency is about more than AI models

For years, the governance of AI developers, and particularly of generative AI developers, has been framed almost exclusively as a product/service issue.

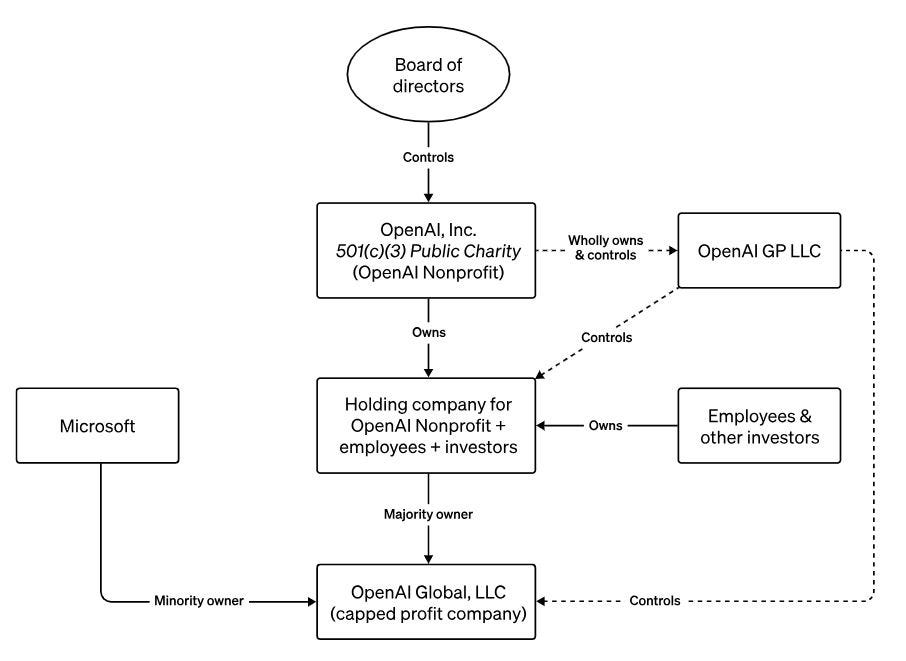

Few batted an eyelid about the unusual dual public benefit/for-profit governance structures of OpenAI, Anthropic, Inflection, and others. And little was said about the convenient lack of transparency, and hence accountability, afforded by these structures.

But the unexpected firing of Sam Altman and the tumultuous events that followed resulted in OpenAI’s mission, structure, and governance being publicly raked over the coals. And the doubts are now spreading to Anthropic, which has largely managed to remain out of the spotlight.

Of course, as private or quasi-private entities, generative AI firms are not legally obliged to disclose much. But it is striking how veiled they are given their stated public benefit missions.

Board members and their remuneration are often not disclosed, and information on board committees - should they exist - and their terms of reference is kept out of public view. Codes of conduct and ethics are conspicuously absent. Publicly stated environmental, sustainability, or supply chain policies, anyone?

Trust and trustworthiness are words never far from the lips of Sam Altman, Dario Amodei, Mustafa Suleyman et al, especially when they are in the company of politicians and journalists.

With the eyes of the world now fixed on them, Altman and co have an opportunity to show the general public that they are genuinely operating in its best interests.

To what extent will they involve citizens and others in decision-making, and shed meaningful light on their companies’ real objectives, strategies, plans, policies, and processes?

Or will they opt to remain in their corporate foxholes?

Time will tell.

AI and algorithmic incidents and controversies

In this issue, we list ChatGPT-related additions to the AIAAIC Repository during November 2023.

News publishers complain OpenAI uses articles to train ChatGPT

Software engineers sue OpenAI, Microsoft for violating personal privacy

OpenAI sued for 'stealing' personal info to create ChatGPT, DALL-E

ChatGPT's ability to generate accurate computer code plummets

Immunefi bans 'inaccurate' ChatGPT-generated bug bounty reports

Australian researchers use ChatGPT to assess grant applications

AIs can guess Reddit users' age, location, and what they earn

Chatbot guardrails bypassed using lengthy character suffixes

Perth doctors warned for using ChatGPT to write patient medical records

ChatGPT writes code that makes databases leak sensitive info

Visit the AIAAIC Repository for details of these and 1,200+ other AI, algorithmic and automation-driven incidents and controversies

Submit an incident or controversy.

AIAAIC policy and research citations, mentions

Amidst a swirl of research papers and policy reports citing or mentioning AIAAIC over the past few weeks, we are pleased to highlight two new additions to the canon by AIAAIC volunteers.

McCombs School of Business PhD student John-Patrick Akinyemi, alongside Huseyin Tanriverdi and Terrence Neumann, published Mitigating Bias in Organizational Development and Use of Artificial Intelligence

Former UNDP technical advisor Alex Read authored A democratic approach to global artificial intelligence safety for the Westminster Foundation for Democracy, a primer for the UK’s AI Summit in November 2023

Explore more research reports citing or mentioning AIAAIC.

AIAAIC in the news

IFLA. Developing a library strategic response to Artificial Intelligence

Jose Renato Nalini, RegiaoHoje. Ela e Preconceituosa (She Is Prejudiced)

中国信息安全, 安全内参. 生成式人工智能的安全风险及监管现状 (Security risks of generative artificial intelligence and the current state of regulation)

More news and coverage mentioning AIAAIC.

AIAAIC news

Over recent months, AIAAIC has been developing a general-purpose taxonomy of risks and harms driven by and relating to AI and adjacent technologies. Intended to help researchers, civil society organisations, and policy-makers hold AI developers and operators to account, and to improve end user and general public understanding, the taxonomy will be open and machine-readable. With the main ontology and primary risk categories now agreed, AIAAIC Alert subscribers are invited to join existing participants from universities, NGOs, the media, and business to identify and define the harms and causes. Click here to get involved.

AIAAIC is pleased to welcome two new volunteers. Djalel Benbouzid brings over 13 years of experience in machine learning, in roles ranging from researcher and data scientist to AI governance manager, latterly at Volkswagen Group. Isabella Chan is a market researcher and brand strategist who holds an MSc in Criminology from the London School of Economics, an English Qualifying Law Degree from BPP Law School, and a Bachelor of Arts in Sociology from Queen’s University, Canada.