New release: Taxonomy of AI, Algorithmic, and Automation Harms

Introducing A Collaborative, Human-Centred Taxonomy of AI, Algorithmic, and Automation Harms


AIAAIC is delighted to share our new research paper on the harms of AI, algorithmic and automation systems.

VIEW/DOWNLOAD AIAAIC’s harms taxonomy research paper.

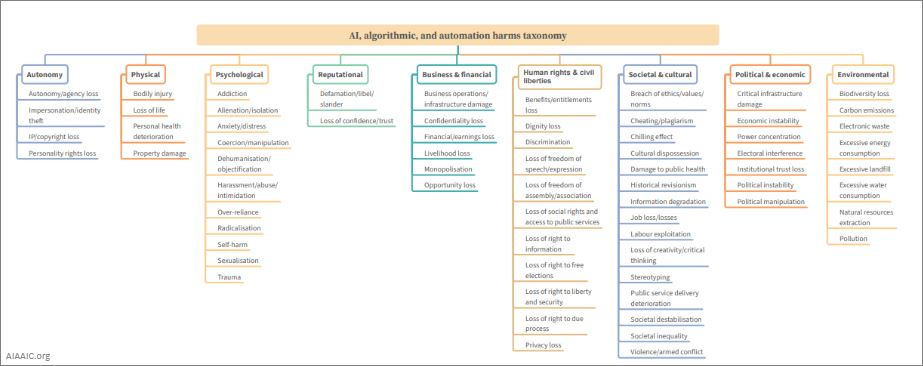

Based on the 1,500 (and counting) real-world incidents and controversies involving AI, algorithmic and automation systems indexed in the AIAAIC Repository, the taxonomy aims to equip researchers, civil organisations and the general public to better understand and take action on the harms and violations of AI and related technologies. Until now, the general public has been largely left out of public policy and technology decision-making around AI and related technologies.

AIAAIC aims to change that. Our goal is one of transparency, accountability, inclusivity and empowerment. We aim to provide a means for a broader set of stakeholders to be involved in AI’s ethical and responsible development, and this taxonomy forms a key plank in our efforts.

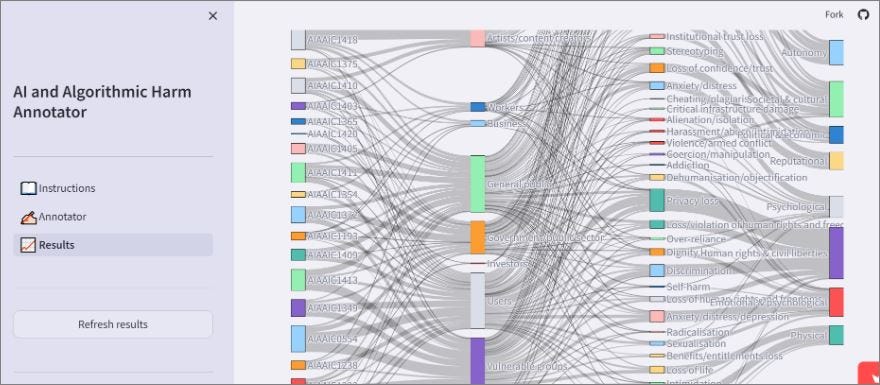

Developed in conjunction with a multidisciplinary, global team of researchers, philosophers, lawyers, statisticians, economists, and experts in human rights, disinformation, computer science and other fields, the taxonomy is human-centred, flexible and extensible. It has been rigorously tested using a custom harms annotation tool (see below) to ensure it is clear and understandable to a broad set of audiences.

The taxonomy will be further refined over the coming months in a structured and transparent manner involving a large, diverse group of individuals representing a broad range of interests and expertise, countries and cultures, ages and genders.

Get in contact if you have any comments or questions, or are interested in getting involved in phase 2 of the project.