AIAAIC Alert #18

Monthly round-up of goings-on connected with AI, algorithmic and automation transparency, openness, and accountability

#18 | March 29, 2024

Keep up with AIAAIC via our Website | X/Twitter | LinkedIn

All those near-empty AI registries are creating a neat vacuum

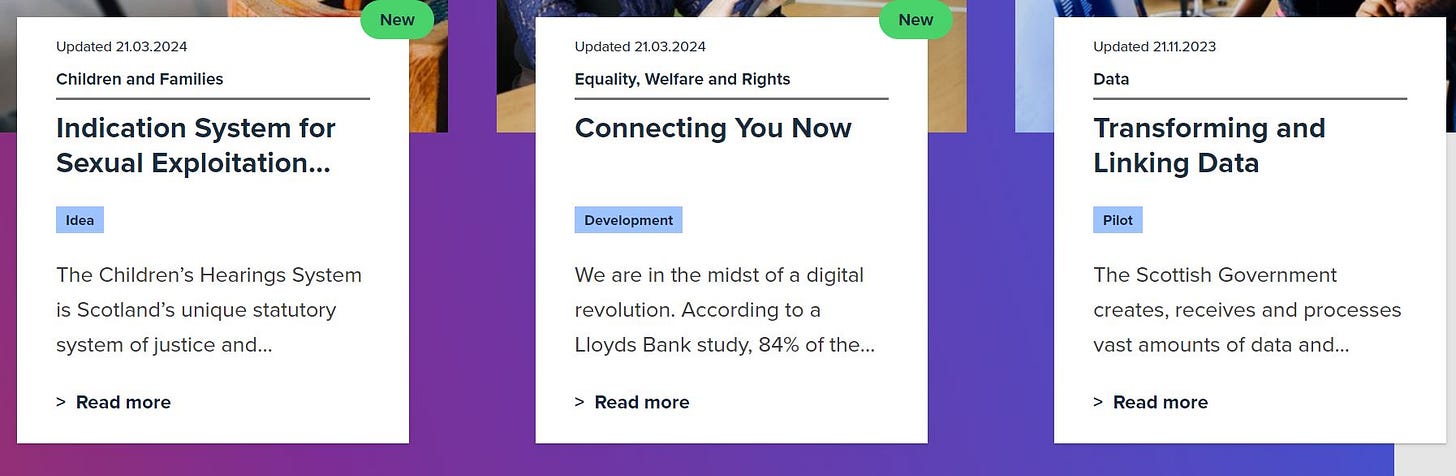

Scotland AI Register

Dialling back a few years, AI registries were something of a thing. Public sector bodies had been using AI and algorithms for years, complaints were rife, and politicians were asking questions.

But few end users (ie. citizens) had any real idea how they worked or understood the nature of their risks, assuming they knew they existed at all.

So the idea of building supra-national, national or city-level registries (eg. Amsterdam, Helsinki) of these systems seemed a positive one.

Pitched as a major step in transparency, openness and accountability, they were also heralded as a means for public authorities to normalise AI and develop trust.

But a quick glance at some of these efforts reveals that whilst the information provided is sometimes useful, albeit primarily to researchers and civil society organisations, the cupboards are often threadbare:

Helsinki’s AI registry details eight systems, despite having been launched in 2020.

Scotland’s AI registry lists a meagre three systems, none of which are live.

A new repository for public sector organisations in the UK using the government’s (positively stringent) Algorithmic Transparency Recording Standard lists a grand total of seven systems across central, regional and local government.

Which begs the question: what’s the hold-up? Lawyers looking to reduce legal exposure? The slow grind of public sector bureaucracy? Civil servants grappling nervously with the vagaries of public accountability? Or their political bosses failing to drive policy or get into the policy weeds?

Whatever the reason, the longer the wait, and the thinner the information provided, the more likely that NGOs and others will take matters into their own hands by developing their own registries and forcing transparency and accountability from the outside.

For example, UK legal charity Public Law Project has created the Tracking Automated Government (TAG) Register that currently keeps track of 55 government automated decision-making systems. The Internet Freedom Foundation created the Panoptic Tracker of 170 facial recognition systems across India.

And there are plenty of others, some of which are listed here.

It is said that out of crisis comes opportunity. It also typically forces greater transparency.

In this vein, is it pure coincidence that the only model graded High transparency in the TAG Register is the UK government’s A-level grade allocation model, the use of which infamously resulted in skewed exam grades and students protesting outside Downing Street yelling ‘F*%! the Algorithm’?

In the crosshairs

Israel facial recognition system misidentifies innocent Gazans

A facial recognition system used by Israel in Gaza misidentified scores of innocent Palestinians, resulting in their abduction, interrogation, and physical beating. All’s fair in love and war?

Google SGE recommends malware, fraud sites

Google's AI-powered Search Generative Experience (SGE) is reportedly recommending malicious websites, including ones containing malware and scams.

Russian TV deepfake blames Ukraine for Crocus City Hall attack

A Russian TV station broadcast a synthetic video of Ukrainian officials, including top security advisor Oleksiy Danilov, allegedly admitting to orchestrating the Crocus City Hall terror attack in Moscow.

Godfrey Gao, Coco Lee AI resurrections spark criticism

The unauthorised AI recreations of deceased Taiwanese Canadian actor Godfrey Gao and Chinese American singer Coco Lee sparked debate about the legal implications of using a dead person’s name, image, voice and reputational likeness.

Late Night with the Devil AI interstitials provoke backlash

Horror film 'Late Night with the Devil' used AI to create three still images that appear as brief interstitials. Humans saw their jobs at risk.

University of Michigan partner sells student data for AI training

A partner to the University of Michigan tried to sell student data to organisations training AI models. Cue privacy controversy and partnership termination.

NHS plan to AI generate patient notes draws criticism

The UK National Health Service said it would introduce AI for automatically generating patient notes during medical appointments. Privacy experts blasted the plan as ‘creepy’.

Visit the AIAAIC Repository for details of these and 1,200+ other AI, algorithmic and automation-driven incidents and controversies

Report an incident or controversy.

Research citations, mentions

Xu, W. (2024). A New Design Philosophy: Human-Centered Artificial Intelligence

Anurag A.S. The Evolution of AI and Data Science

Williamson B., Molnar A., Boninger F. Time for a Pause: Without Effective Public Oversight, AI in Schools Will Do More Harm Than Good

McGrath Q., Hevner A.R., de Vreede G.J. Managing Ethical Risks of Artificial Intelligence in Business Applications

TenSquared Research. Web3 and Artificial Intelligence: The State of Play (pdf)

Wells Fargo. The ascent of generative AI - what investors should know

FTI Consulting. Bridging the Gap Between Artificial Intelligence Implementation, Governance, and Democracy: An Operational and Regulatory Perspective

Explore more research reports citing or mentioning AIAAIC.

AIAAIC in the news

AIAAIC founder Charlie Pownall is quoted in a Libération article on porn deepfakes and nudifiers (in French).

MIT visiting lecturer Irving Wladawsky-Berger cites AIAAIC in an article exploring the opportunities and challenges of open source AI.

Andras Laslo Tolgyescit encourages managers considering how to manage the risks of generative AI systems to visit the AIAAIC Repository to ‘understand the scope, type, and frequency of AI incidents’ (in Hungarian).

A prominent IT commentator in South Korea mentions AIAAIC when making the case for proper AI guardrails (in Korean).

Benzinga contributor Tom White mentions AIAAIC’s ethical focus when setting out how organisations can balance innovation and privacy.

China Daily cites AIAAIC data in an in-depth analysis of the nature of AI on society.

MIAI Grenoble Alpes research fellow Cornelia Kutterer cites AIAAIC data in a detailed analysis of the regulation of AI foundation models under the EU AI Act.

Writing in Japanese business magazine Toyo Keizai, Accenture executive Manabu Hoshina cites AIAAIC when making the case for using ‘human wisdom’ in managing and using AI (in Japanese).

More news and coverage mentioning AIAAIC.

AIAAIC news

AIAAIC is delighted to welcome two new faces.

Tim Carter has joined AIAAIC to help develop our service offer and assist in the development of key partnerships. Tim brings significant experience managing technology and digital change programmes across multiple industry sectors since the mid-1990s.

Alice Poorta joins our editorial and data team, where she will focus on IP/copyright incidents and issues. Alice previously handled IP/copyright, stakeholder controls and monetisation models for YouTube's GenAI products, and was a member of Google’s AI Ethics Fellowship.

As noted above, many AI registries remain half-baked. AIAAIC has developed a new ‘system’ page template (eg. PimEyes, UK sham marriage triage tool, Worldcoin) intended to help publicly document the risks and harms these systems are seen to pose. This is a first step, and we intend to keep pushing the concept forward - and we’d love you to help shape our efforts. Get in touch if you’d like to help.