AIAAIC Alert #23

A monthly round-up of goings-on connected with AI, algorithmic and automation transparency, openness, and accountability

#23 | May 3, 2024

Keep up with AIAAIC via our Website | X/Twitter | LinkedIn

Safety is no substitute for responsibility

Image: Throwflame

Talk to pretty much anyone in the tech industry or in tech policy-making circles and the discussion almost inevitably focuses on innovation and ‘safety’, as in making systems safe from accidents, misuse, or other harmful consequences.

Safety sounds a comforting, fluffy word. But it is also one that means different things to different people, and is easily misconstrued and abused.

Consider the following from the last few days:

Ohio firm Throwflame launched the Thermonator, a robot dog with a flamethrower strapped to its back that is ‘ready for anything’ and can ‘deliver on-demand fire anywhere’. It can be bought at the click of a button, delivery is free across the US, and there are no pesky questions about who is buying it or what it will be used for.

WIRED discovered tens of thousands of ads running in plain sight on Facebook and Instagram promoting sexually explicit ‘AI girlfriend’ apps running on Google’s Play Store, in violation of both companies’ adult content policies.

Austrian rights outfit noyb filed a complaint against OpenAI with Austria’s privacy watchdog after ChatGPT failed to supply the correct birthday of a public figure, a failure that potentially violates EU privacy law.

OpenAI, Microsoft et al. refused to share their models with the UK AI Safety Institute, despite public commitments to do so, according to a recent Politico report.

Companies in all industries are under pressure to talk a good safety game, lest they lose the trust of their users, regulators and others, and the backing of their investors.

But the pace of change and the potential riches, combined with the lack of legislation and substantive regulatory oversight, appear to have persuaded many AI firms and their financial backers that they can roll out easily misused products with scant regard for human values and norms, treating foreseeable damage to individuals, society and the environment as little more than the price of entry.

Little surprise then that the AI industry prefers to focus on safety rather than responsibility or ethics. An automated flamethrowing dog may be touted as safe, in that it is unlikely to explode in its user’s face.

But can it be considered responsible or ethical to sell such a machine to all and sundry?

Support our work collecting and examining incidents and issues driven by AI, algorithms and automation, and making the case for technology transparency and openness.

In the crosshairs

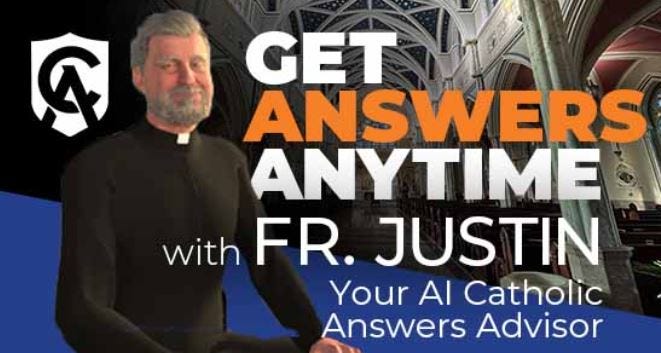

Image: Catholic Answers

Father Justin AI priest defrocked after inappropriate responses

AI priest Justin was downgraded to a lay character after repeatedly claiming it/he was a real member of the clergy. Not a great look for the Vatican, or for a Pope busily making the case for the responsible use of AI.

Grok accuses Klay Thompson of 'brick-vandalism spree'

Elon Musk’s libertarian chatbot turned social media jokes about basketball star Klay Thompson 'throwing bricks' (missing shots) into a literal story of Thompson vandalising houses with bricks. Thompson has yet to respond publicly.

Baltimore high school athletic director uses AI to smear principal

Apparently to get his own back over allegations that he had misused school funds.

Drake threatened with lawsuit over AI-generated Tupac Shakur voice

The Canadian rapper pulled the track after being threatened with a lawsuit by the estate of the late rapper Tupac Shakur. Drake’s real target: fellow rapper Kendrick Lamar, with whom he has ‘business’.

DC Comics pulls AI-generated covers after backlash

The latest in a long series of media owners using AI to promote their wares and getting short shrift as a result. In this case, the publisher appears to have had the wool pulled over its eyes by one of its suppliers: another example of supply chain mismanagement.

Huawei P70 Ultra AI editing tool criticised for removing people's clothing

An object removal too far.

Visit the AIAAIC Repository for details of these and 1,450+ other AI, algorithmic and automation-driven incidents and controversies

Report an incident or controversy.

Research citations, mentions

Lee Dixon R.B., Frase H. An Argument for Hybrid AI Incident Reporting (pdf)

UK Competition & Markets Authority. AI Foundation Models - Technical update report

Ajaykumar S. et al. A Roadmap for AI Governance: Lessons from G20 National Strategies (pdf)

Wang A., Tan J., Murarka R., Sean Shu S. Xiong Lim Z. Enterprise-Industries Mapping of Gen AI Use Cases

Mishra A., Sinha S., George C. P. Shielding against online harm: A survey on text analysis to prevent cyberbullying

Torkamaan H., Tahaei M., Buijsman S., Xiao Z., Wilkinson D., Knijnenburg B. The Role of Human-Centered AI in User Modeling, Adaptation, and Personalization—Models, Frameworks, and Paradigms

Ortega-Bolaños R., Bernal-Salcedo J., Germán Ortiz M., Galeano Sarmiento J., Ruz G.A., Tabares-Soto R. Applying the ethics of AI: a systematic review of tools for developing and assessing AI-based systems

Falconer J. Knowledge Management at a Precipice: The Precarious State of Ideas, Facts, and Truth in the AI Renaissance

Explore more research reports citing or mentioning AIAAIC.

AIAAIC in the news

AIAAIC was mentioned in an investor transparency campaign fronted by AFL-CIO Equity Index Funds asking Panasonic Group to publish an annual transparency report on its use of AI | read (pdf)

The Leadership Conference on Civil and Human Rights’ VP KJ Bagchi cited AIAAIC in testimony to a US Commission on Civil Rights briefing on government use of facial recognition | read

AIAAIC data is used in a report by the Czech National Bank on the double-edged nature of artificial intelligence | read

University of Udine lecturer Giulia G. Cusenza writes in the Regulatory Review that she drew on the AIAAIC Repository to select and analyse US state and federal court decisions involving governmental use of AI | read

Lee Allison and Tara Boneta quote AIAAIC founder Charlie Pownall in an AI in Supply podcast detailing notable AI failures | listen

New Atlas mentions AIAAIC in its breakdown of this year’s AI Index Report by Stanford HAI | read

To cap this newsletter off in style, we cannot but mention Tatler, which draws on AIAAIC incident data to tease readers about the disruptive nature of generative AI | read

More news and coverage mentioning AIAAIC.

AIAAIC news

AIAAIC is pleased to welcome Jyoti Prajapati, who joins as a volunteer. Jyoti is currently serving as a research associate with TEC, Department of Telecommunications, Government of India, focusing on the robustness assessment of AI systems. She was actively involved in developing India’s Fairness Assessment and Rating of Artificial Intelligence Systems standard.

April data on AIAAIC Repository usage is now available on our website. US visitors continue to top the charts, and most interest is in ChatGPT. Meantime, there has been a substantial rise in traffic from India, and increased interest in ClothOff, a controversial denudifier recently the subject of a Guardian investigation into its founders.

Following the introduction of our new AIAAIC Repository ‘System’ page template (example), as mentioned in issue #18 of this newsletter, we have started rolling out our new ‘Incident’ page template (example). Both templates are intended to make pages clearer, more readable and more usable. As ever, we’d love to know your thoughts.