AIAAIC Alert #43

OpenAI's o1 selective disclosure; AI newscasters; FTC AI hype crackdown; Facebook political advertising; AI risk management and incident reporting papers; Harms taxonomy call for participation

#43 | September 27, 2024

Keep up with AIAAIC via our Website | X/Twitter | LinkedIn

High on reasoning, highly selective on transparency

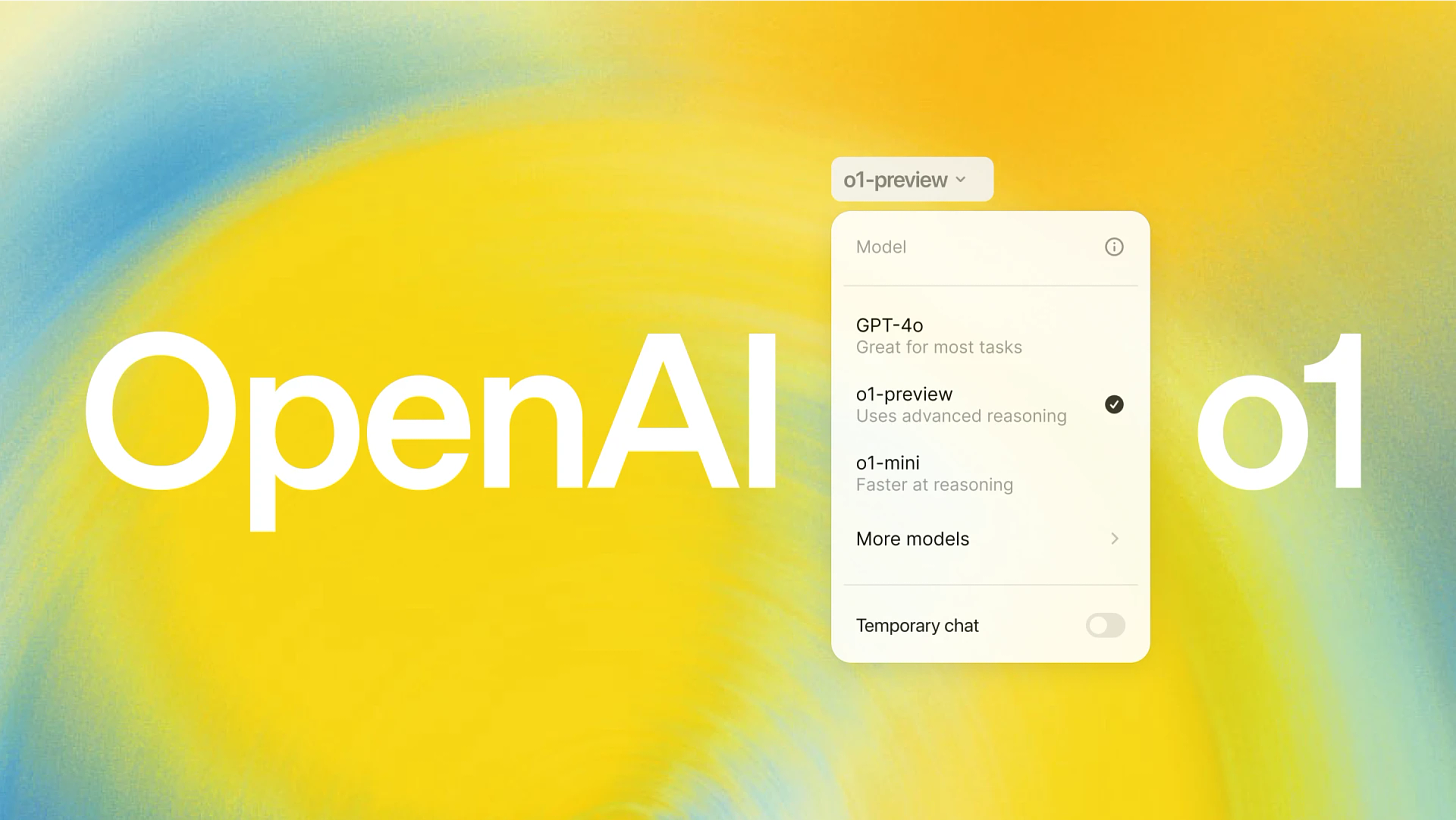

OpenAI’s new suite of o1 models may be smart at reasoning and problem solving. But its creator - in the news this week for finally acknowledging that it and its CEO are in it for the money as opposed to “benefiting all of humanity” - has kept quiet about some important aspects of the model’s development and impact.

Designed for complex reasoning tasks, especially in science and maths, o1 uses a "chain-of-thought" approach to process information step-by-step before responding, similar to how humans solve problems.

According to OpenAI, the model correctly solved 83 percent of problems in a qualifying exam for the International Mathematics Olympiad, compared to only 13 percent solved by its predecessor, GPT-4o. o1 also reportedly performs similarly to PhD students on challenging benchmark tasks in physics, chemistry, and biology. In coding contests, o1 reached the 89th percentile in Codeforces competitions.

All of which sounds technically excellent. But capabilities as powerful as these have also prompted concerns about the model's impact on critical thinking, education and the future of coding. Ominously, the likes of Yoshua Bengio and the Center for AI Safety’s Dan Hendrycks have expressed fears about o1’s capability for producing CBRN (chemical, biological, radiological, and nuclear) weapons, and about OpenAI’s apparently growing willingness to release increasingly risky models.

OpenAI’s o1 system card (pdf) contains some detailed information about the model’s reasoning capabilities and certain aspects of its safety, including its ability to create biological threats - which it ranks as a Medium risk on the basis that biological weapons experts “already have significant domain expertise”.

This sounds reasonably plausible; surely anyone can find information on how to make biological weapons on the internet if they look hard enough. But the exact way the risk ranking was calculated has not been revealed, which raises more questions than it answers - not least, why not? Surely it is in the public interest to reveal the inner workings of the ranking?

Information on two other aspects of o1’s development - both firmly in the public eye at the moment - is also conspicuously thin.

First, precisely what data was o1 trained on? Here’s what OpenAI has to say - and it is unlikely to satisfy content owners whose data is being constantly swept up in OpenAI’s ongoing scraping activities.

And then there are the environmental impacts - including water consumption, power consumption and carbon emissions - of the model’s training, or its estimated downstream impacts, about which OpenAI utters not a word.

OpenAI’s reluctance to be open about o1’s data sources is understandable - though not excusable - given the controversy over fair use.

But what’s the reason for OpenAI’s failure to reveal any environmental information about the model? Because o1 spends more time “thinking” and generates more text, it requires a good deal more computing power than other generative AI models - which can hardly be considered environmentally friendly.

As such, OpenAI’s o1 system card should be considered little more than a cosmetic exercise in highly selective disclosure.

Author: Charlie Pownall, Founder and Managing editor, AIAAIC

Support our work collecting and examining incidents and issues driven by AI, algorithms and automation, and making the case for technology transparency and openness.

In the crosshairs

AI and algorithmic incidents hitting the headlines

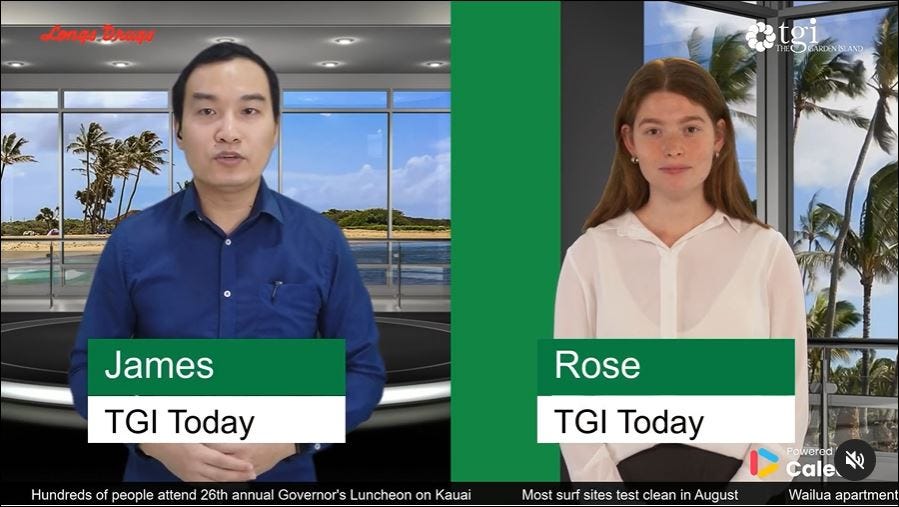

Image: The Garden Island

Hawaii newspaper replaces journalists with AI newscasters

Which didn’t go down at all well with, err, journalists, unions, readers/viewers, politicians and just about everyone else, pointing to a fundamental misalignment between the strategic vision of the paper’s owners (who appear to change every few months) and its tech-solutionist Israeli supplier on the one hand, and everyone else’s expectations and requirements on the other. Where does The Garden Island go from here?

Edinburgh Airport accused of "arbitrary" AI-generated parking pricing

Some prices are generated by a dynamic pricing system, others by humans. But airport management won’t say which, raising questions not just about the algorithm but also about the airport’s transparency and accountability.

US FTC cracks down on DoNotPay "robot lawyer"

For deliberately misleading consumers about the capabilities of the bot, including claiming that it could analyse a business website for law violations based solely on the business’s email address. Hmmm.

Google's AI Overviews recommends parents smear human faeces on balloons

AI Overviews needs some potty training of its own.

AI chatbot persuades woman to euthanise her dog

User naivety meets anthropomorphism-by-design.

Facebook fails to block thousands of misleading political ads

A Meta spokesperson claimed that their systems had removed “nearly all” the cited ads “at the time” they were run. Who to believe?

Generative AI pollutes, terminates human language use project

AI enshittification spells the end for Robyn Speer’s Wordfreq investigation into the frequency of natural language usage.

Report an incident or controversy.

Citations & references

Indian government researchers analyse aviation, cybersecurity and fire safety incident reporting mechanisms, as well as existing AI incident databases, to propose a formalised process for AI incident reporting for critical infrastructure, thereby enabling data collection and analysis, mitigation and regulation. | Read paper (IEEE) $

A team of Malaysian and Indian researchers has developed a framework for AI risk management using a knowledge graph that stores and manages information on risk management, the AI life cycle, and stakeholder involvement, whilst adhering to established standards. | Read paper (pdf)

Researchers Fernanda dos Santos Rodrigues Silva and Tarcízio Silva argue that critical race theories are essential lenses for the ethical future of AI in Brazil. They go on to make the case for regulation that effectively mitigates discriminatory biases. | Read paper (in Portuguese)

The founder of Spain’s OdiseIA AI observatory examines the “societal emergency” of AI incidents and sets out what should be done about it. | Read paper (pdf)

Explore more research citing or mentioning AIAAIC.

AIAAIC news

Harms taxonomy. AIAAIC has started the second phase of our harms taxonomy project, with the aim of making the current classifications clearer and more exhaustive, and the taxonomy as a whole inter-operable with other systems. The next iteration will be released in early 2025 and will also underpin the next version of the AIAAIC Repository. People from different regions, backgrounds and areas and levels of expertise are welcome to take part. Apply to participate

Charles Cai joins AIAAIC. Charles Cai is a technical architect/technology leader who has led major digital transformation initiatives in investment banking, energy trading, retail and clean energy in London and Shanghai. Recently, ChatGPT and GenAI technologies accelerated his work on cloud data consolidation, machine learning and data insights mining for a global payment company. Recognizing the potential and risks associated with AI and GenAI, he began building an open-source, transparent AI agent platform. This led him to discover AIAAIC, where he is helping develop our next tech platform. Charles strongly believes in the importance of AI transparency.

See media coverage mentioning AIAAIC.

From our network

What advisors, contributors and others in AIAAIC’s network are up to

Recent papers by AIAAIC contributor Professor Paolo Giudici and colleagues at the University of Pavia, Italy, and the National University of Singapore:

The growth of AI applications requires risk management models that balance opportunities with risks. The researchers propose a "Rank Graduation Box" of integrated statistical metrics that can measure the Sustainability, Accuracy, Fairness and Explainability of AI applications, underpinned by a common underlying statistical methodology: the Lorenz curve. | Read paper

Fairness and explainability are considered essential to the trustworthiness of AI credit rating, but are rarely analysed in tandem. The researchers propose a general post-processing methodology for credit ratings using machine learning models applied to a panel dataset of 119,857 credit records for approximately 20,000 small and medium-sized enterprises (SMEs) in four European countries and eleven industry sectors from 2015 to 2020. | Read paper